Inspiring and/or scary?

Emotional tensions in student responses to formative feedback from chatbots at a master’s level course in counseling

Helle Merete Nordentoft, Danish School of Education, Aarhus University

Tine Wirenfeldt Jensen, Danish School of Education, Aarhus University

Abstract

This study contributes to an emerging field of research on generative artificial intelligence (GAI) and feedback in higher education by examining how master's students attending a course in counseling respond to chatbots as a “relevant other” (Carless & Young, 2024) in formative feedback practices. Using a Bakhtinian (1981) dialogic framework and a longitudinal mixed-methods approach, we show three distinct student responses to chatbot voices: positive, skeptical, and struck. Our findings reveal students' emotional ambivalence and the tensions between trust/distrust and acceptance/rejection of chatbot voices. These tensions give rise to different understandings of what it means to be critical in an academic context, along with ambivalence between expecting “the right answers” from chatbots and engaging in a dialogical learning process. We argue that acknowledging these emotional tensions is crucial in realizing GAI's learning potential (Maurya & DeDiego, 2023) in both academic work and future counseling practice.

Danish abstract

Dette studie bidrager til aktuel forskning om generativ kunstig intelligens (GAI) og feedback på videregående uddannelser ved at undersøge, hvordan kandidatstuderende på et undervisningsforløb om vejledning forholder sig til chatbots som en 'relevant other' (Carless & Young, 2024) i formative feedbackprocesser. Med afsæt i Bakhtins (1981) dialogforståelse og et longitudinalt mixed-method studie viser vi tre forskellige måder, studerende reagerer på chatbot-stemmer: en positiv, en skeptisk og en 'ramt' respons. Vores analytiske fund viser de studerendes emotionelle ambivalens samt spændinger mellem tillid/mistro og accept/afvisning af chatbot-stemmer. Disse spændinger afføder forskellige forståelser af, hvad det betyder at være kritisk i en akademisk kontekst, samt en ambivalens mellem at forvente ”de rigtige svar” fra chatbots og at engagere sig i en dialogisk læringsproces. Vi argumenterer for, at anerkendelsen af disse emotionelle spændinger er afgørende for at realisere GAIs læringspotentiale (Maurya & DeDiego, 2023) i både akademisk arbejde og fremtidig vejledningspraksis.

Introduction

Generative artificial intelligence (GAI) is currently impacting all areas of society, including counseling. As a result, individuals seeking counseling may have interacted with GAI in the form of chatbots before beginning a physical or online session. Therefore, professional counseling competencies should include an understanding of GAI and familiarity with its use, enabling counselors to effectively engage with and guide clients in navigating this new reality. This article stems from the belief that GAI offers new ways to develop counseling competencies and practices. In the context of counseling education, Maurya demonstrates the significant potential of chatbots in client simulation role-play, allowing students to practice counseling skills (see for instance Maurya, 2023, p. 36; 2024). Building on this research, Maurya & DeDiego (2023) proposed a framework for integrating GAI in counselor education and supervision, suggesting ways to leverage chatbots for skill development and reflective practice. They advocate that counseling education programs incorporate comprehensive training in the responsible use of GAI technologies, emphasizing the development of ethical guidelines and specialized modules to ensure future practitioners are adequately prepared. These programs are crucial in cultivating ethical use of chatbots. Maurya & DeDiego call for future studies to address the creation, execution, and assessment of such training initiatives as well as models for facilitating

“[...] meaningful and constructive learning experiences for CITs [counselors-in-training] in a technology-enhanced environment” (Maurya & DeDiego, 2023, p. 2).

To this end, we have introduced GAI in a course on counseling as part of a master’s degree program at Aarhus University in Denmark. Here, GAI functions as a feedback partner in students’ work with their home assignment. Inspired by the prompting technique “the persona pattern” (e.g. White et al., 2023), the chatbots are given specific roles, making it possible for students to interact with them and get feedback in different ways. Our understanding aligns with Carless & Young (2024) in viewing feedback as a dialogical process in which students interact with “relevant others” (p. 860) and in which:

“[...] learners generate and make sense of performance relevant information and use it to develop their thinking or work” (Carless & Young, 2024, p. 858).

In this regard, de Kleijn (2023) and Molloy, Boud & Henderson (2020) have shown that a key student competence is developing feedback literacy and being able to seek feedback from a range of different sources.

Previous studies have explored various modes of synchronous and asynchronous peer feedback within the same course context as presented in this article. These studies revealed that the process of giving and receiving peer feedback can be both rewarding and emotionally charged (Nordentoft & Møller, 2020, 2022), as students often fear their self-perceived incompetence may be exposed to their peers. Moreover, findings from feedback research literature highlight the importance of timing as a critical factor in the feedback process (Carless, 2020). In this study, chatbots are seen as a potential “relevant other” (Carless & Young, 2024) that is available 24/7 in the context of feedback and learning in the counseling course. Feedback also features in a broader, positive GAI discourse emphasizing the potential of personalized chatbot feedback to enhance student motivation and promote active learning (Lai et al., 2023). Research on GAI and feedback processes in higher education, including counseling education, represents a new and growing field of inquiry. However, a review of studies exploring the use of chatbots in higher education (McGrath et al., 2024) identified feedback primarily as a theme in relation to second language learning, with a focus on how students perceive written tutor feedback versus chatbot feedback on their work (e.g. Escalante et al., 2023). Bruun et al. (2024) pointed to a general lack of research on chatbots in higher education that is grounded in pedagogical and learning theory, calling for studies exploring how chatbots can be integrated in teaching, supervision, and formative feedback. This exploratory study therefore contributes to the emerging body of research on how GAI can play a role in students’ feedback practices in higher education. In this article, we address the following research question:

What are the implications of the ways in which students respond to the introduction of chatbots as part of the formative feedback for their learning process in a master’s course in counseling?

First, we describe the learning design of the elective course “Counseling as Pedagogical and Institutional Practice”, followed by a description of our theoretical and methodological approach in the analysis. After presenting our analysis, we summarize our observations in a model we call "the emotional ambivalence compass," capturing the challenges and learning potentials when working with chatbots in students' formative feedback processes. We conclude by outlining the study's main argument and findings.

Learning design

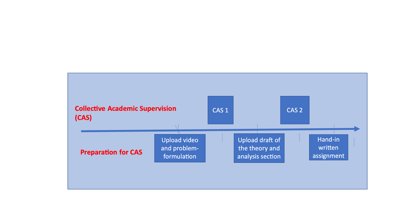

During the fall of 2023, we introduced GAI as part of the learning design for the elective course “Counseling as Pedagogical and Institutional Practice” (10 ECTS) in the Department of Educational Science at Aarhus University. Use of GAI was not permitted at the university at the time, so we had to obtain special permission for this research project from the faculty management. Eighteen female students and one male student aged 20-25 from different master’s programs within the humanities attended the course, where they were presented with different theoretical and methodological approaches to counseling (McLeod, 2013). In the written exam assignment, students had to identify a counseling problem, justify the relevance of a particular counseling approach, and analyze a video-recorded counseling session in which they themselves assumed the role of the counselor. Supervision and feedback consisted of two Collective Academic Supervision (CAS) sessions (Nordentoft et al., 2019; Nordentoft et al., 2013). Before the CAS sessions, students were divided into peer feedback groups, and the sessions alternated between short presentations by the supervisor and dialogue among students in the peer feedback groups. Our learning goals for introducing GAI and chatbots as part of students' formative feedback process were:

· To facilitate knowledge and critical awareness of the potential and pitfalls of applying GAI in counseling practices

· To strengthen the understanding of both counselor and client roles through interactions with chatbots

· To develop academic writing skills

Figure 1. Overview of the learning process in the elective course.

In the first teaching session, we introduced GAI and three different ways to interact with the chatbot as a supplementary feedback partner, inspired by the prompting technique “the persona pattern” (White et al., 2023). Specifically, we outlined and introduced the following three chatbot personas:

1. Practice partner through a fictional role-play qualifying the counselor role: Students can use the chatbot as a practice partner, acting either as counselor or counselee. The chatbot can also advise students on how to apply different approaches to counseling.

2. Coaching partner before producing video footage of the counseling conversation.

3. Feedback provider on academic texts: Chatbots can act as a critical co-reader and give feedback on students’ texts in terms of whether they present structured and coherent argumentation.
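As a rough illustration of how the persona pattern can be applied to the three roles above, the sketch below builds one prompt per persona. The template wording, the dictionary keys, and the `build_prompt` helper are hypothetical and are not the prompts given to students in the course; they only show the general shape of the technique.

```python
# Hypothetical persona-pattern templates. The wording is illustrative only
# and is NOT the material handed out in the course.
PERSONAS = {
    "practice_partner": (
        "Act as a {role} in a fictional counseling role-play. "
        "Stay in character and respond as a {role} would in a session about {topic}."
    ),
    "coaching_partner": (
        "Act as a coach helping me prepare a video-recorded counseling conversation. "
        "Ask me questions about my plan for a session about {topic}."
    ),
    "feedback_provider": (
        "Act as a critical co-reader of an academic text. "
        "Comment on whether the argumentation is structured and coherent, "
        "and point out weaknesses as well as strengths.\n\nText:\n{text}"
    ),
}


def build_prompt(persona: str, **fields: str) -> str:
    """Fill in the template for the chosen persona with the given fields."""
    if persona not in PERSONAS:
        raise ValueError(f"Unknown persona: {persona}")
    return PERSONAS[persona].format(**fields)


# Example: the chatbot plays the counselee so the student can practice
# the counselor role. No sensitive personal information is included.
prompt = build_prompt("practice_partner", role="counselee", topic="choice of study program")
print(prompt)
```

Asking the feedback persona explicitly for weaknesses as well as strengths is one possible way of requesting a more critical reading; whether it has that effect will depend on the chatbot and the text.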

Students worked with the version(s) of ChatGPT they had access to, either in the form of free access (ChatGPT 3.5) or a subscription they purchased themselves (ChatGPT 4). When the course was offered, Aarhus University did not yet provide Copilot or an alternative platform for accessing GAI. Interacting with the most-used chatbot at the time therefore gave students experiences of interacting with chatbots similar to those their potential clients might have. We introduced GAI and the use of chatbots in a classroom video presentation and an online written introduction to GAI, including how to interact with chatbots and create prompts for each of the three personas. Students were told not to share sensitive personal information with chatbots.

A sociocultural perspective on learning

From a sociocultural perspective, meaning-making and learning are not individual cognitive phenomena, but deeply rooted in a collaborative and sometimes tension-filled dialogue with “relevant others” (Carless & Young, 2024). Our theoretical foundation is the Russian literary theorist Bakhtin’s (1981) dialogical understanding of meaning-making, in which language use and learning are situated within specific historical, cultural, and social contexts. According to Bakhtin, we engage in dialogue whenever we express and interact with different experiences and opinions. Moreover, dialogue is ongoing and unfinalizable (Frank, 2005); what we express is permeated by traces of meaning from previous interactions and oriented toward future ones (Bakhtin, 1986, p. 91). Bakhtin refers to these different opinions and experiences as voices. In a Bakhtinian sense, voice does not refer to a physical voice but is seen as the expression of a (value) position, which can be associated with or refer to different discourses, forms of knowledge, or linguistic expressions (Bakhtin, 1981; Nordentoft & Olesen, 2014). Moreover, the interaction between different voices in a dialogue takes place in two constant and competing opening and closing movements or tendencies (Bakhtin, 1981; Nordentoft & Olesen, 2014): 1. a centripetal tendency towards unity, and 2. a centrifugal and expanding tendency towards dialogue and diversity. The seed of meaning-making and learning, according to Bakhtin, lies in the tension(s) arising between different voices and tendencies in a dialogue. In the context of our study, chatbots can be viewed as a source of new and potentially disruptive voices in students' feedback dialogues (Clark, 2024). In the present study, we explore how students respond to feedback from an artificial intelligence in their learning process and how they mediate possible tensions between different voices and tendencies.

Method

To capture students' responses to GAI, we have applied an exploratory and processual research design, collecting both qualitative and quantitative data. All students provided informed consent and are anonymized in the article. In this study, we draw on two kinds of data:

· The quantitative data consists of a pre- and post-intervention question from an online survey (table 1). The survey maps differences in the students' emotional response to GAI before and after interacting with the chatbot in the formative feedback process. A link to the anonymous online survey was sent to students before starting and after completing the course, with 16 students answering the pre-survey question and 12 students answering the post-survey question.

· The qualitative data consists of smartphone recordings of 15 students’ reflections in their peer groups during the two Collective Academic Supervision (CAS) sessions and the midterm evaluation. The data comprises a total of 9 group dialogues (49 minutes). Table 2 shows the questions we asked students to reflect on. Students 1) reflected on the questions without the researchers present while recording on a smartphone and 2) uploaded their recordings to a GDPR-compliant online folder. We have applied this methodological approach in previous studies and found it to be effective in creating a more spontaneous and relaxed atmosphere in which students seemed to feel at ease and open in expressing their experiences and opinions (Jensen & Nordentoft, 2022; Nordentoft et al., 2020).

Table 1. Pre- and post-survey: Question on students’ emotional response to chatbots.

| Question | Response options |
| --- | --- |
| Which of the following describes your feelings and attitudes toward artificial intelligence like ChatGPT or other chatbots? It is possible to select multiple answers. | a. Excitement b. Acceptance c. Optimism d. Hope e. Skepticism f. Unnecessary g. Confusing h. Anxiety |
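Because respondents could select several options, and the pre- and post-survey were answered by different numbers of students (16 and 12), one way to compare the two rounds is by the share of respondents selecting each option rather than by raw counts. The sketch below illustrates that calculation under those assumptions. The function names and the example responses are hypothetical and made up for illustration; they are not the study's data or the authors' analysis procedure.

```python
from collections import Counter

# Response options from table 1.
OPTIONS = ["Excitement", "Acceptance", "Optimism", "Hope",
           "Skepticism", "Unnecessary", "Confusing", "Anxiety"]


def option_shares(responses):
    """Return, for each option, the share of respondents who selected it.

    `responses` is a list with one list of selected options per respondent.
    """
    n = len(responses)
    # set() guards against an option being counted twice for one respondent.
    counts = Counter(opt for selected in responses for opt in set(selected))
    return {opt: (counts[opt] / n if n else 0.0) for opt in OPTIONS}


def share_change(pre, post):
    """Difference in share (post minus pre) for each option."""
    pre_shares, post_shares = option_shares(pre), option_shares(post)
    return {opt: post_shares[opt] - pre_shares[opt] for opt in OPTIONS}


# Made-up responses for illustration only; NOT the study's data.
pre = [["Skepticism", "Anxiety"], ["Excitement"], ["Skepticism"], ["Hope", "Skepticism"]]
post = [["Excitement", "Skepticism"], ["Acceptance"]]

for option, change in share_change(pre, post).items():
    print(f"{option}: {change:+.2f}")
```

Since the survey was anonymous, individual answers cannot be paired across the two rounds, so a comparison of this kind describes the two groups of respondents and not change within individual students.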

Table 2. Feedback from chatbot: Questions for the students’ oral reflections on their interactions with chatbots.

| Setting | Questions |
| --- | --- |
| Questions in the two Collective Academic Supervisions | What has it been like to work with chatbots as a feedback provider on your text drafts? How and when have you used it? Has it helped you in your writing process? How? What have you found unusual or perhaps strange in the feedback that chatbots have given you, and what have you chosen not to use? Have you gained anything from the process of formulating questions for chatbots, even when you chose not to use their answers? Do you have any comments on the introduction and guidance you have received for working with chatbots? What has worked well/could be improved? |
| Questions in mid-term evaluation | How has it been being a student in the elective course so far? What are your two most important counseling-specific and academic insights from the course, and how did you come to each of them? How do you experience the relevance of working with AI in the elective course? Have any of your attitudes/feelings changed compared to the answers you gave in the initial survey? If so, why? Is there anything else you would like to say or comment on? We'd like to hear it. |

Collaborative thematic analysis

We chose thematic analysis as an inductive approach to the analysis due to its flexibility and focus on identifying, analyzing, and reporting patterns (themes) within data. This approach was well-suited for an exploratory study. Inspired by Braun & Clarke (2006, 2019), we followed a reflexive six-phase approach to thematic analysis. During this process, the first author, who was also the course instructor and supervisor, navigated between three different roles: supervisor, evaluator, and researcher. The roles of supervisor and evaluator can be experienced as opposing positions, which increases the complexity of interactions with students. When adding the role of researcher to this mix, it becomes necessary to consider how this shifting array of positions can be managed within a research process (Berger, 2015; Macbeth, 2001). To work with this challenge, three methodological steps were taken: Firstly, the researcher was not present when the students were reflecting on their interactions with the chatbot. Secondly, she emphasized that their reflections would have no impact on the final evaluation. Finally, she collaborated closely with the second author (and guest speaker in the course) throughout the dynamic and emerging project (Braun & Clarke, 2021).

Audio reflections were transcribed using the Whisper transcription app in UCloud, developed at Aalborg University. This tool, which uses OpenAI's Whisper speech recognition model, operates in a closed cloud environment, ensuring secure handling of potentially sensitive data. Prior to transcription, both researchers listened to all audio reflections. All transcriptions were reviewed for errors. The first phase of analysis involved familiarization with the data, where both researchers independently read the transcripts multiple times. During this and subsequent phases, the researchers returned to the original audio recordings and relistened to selected passages, ensuring a deep understanding of the data. Informed by Bakhtin's understanding of the relationship between dialogue and learning, particular attention was given to the tensions arising between different voices and to opening or closing tendencies between centrifugal and centripetal movements in students’ meaning-making and learning. Initial codes (understood as labels) were then generated systematically across the dataset, with the researchers comparing and discussing their coding process. Working together, the researchers collated these codes into potential themes, which were subsequently reviewed and refined through joint analysis sessions. Definitions and names were developed for each theme through researcher consensus. This collaborative approach to analysis was employed to foster a more comprehensive understanding of the data, allowing ongoing dialogue and negotiation of meanings throughout the process.

In our thematic development, we were attentive to the different value positions, discourses, forms of knowledge, and linguistic expressions (Phillips, 2011) that emerged in the students' reflections on their interactions with chatbots. This Bakhtinian perspective enabled us to explore how students navigate and make meaning from the various “voices” present in their learning experiences with chatbots. Finally, compelling examples were selected, and the analysis was related back to the research question and existing literature within the field. Throughout this iterative process, memo writing (Charmaz, 2014) was employed to document emerging ideas, potential connections, and analytical decisions, enhancing reflexivity and deepening the analysis. Thus, memo writing was an integral and iterative part of the thematic analysis process (Morgan & Nica, 2020).

Limitations and ethical perspectives

The dataset does not provide direct observation of chatbot usage. However, we contend that conversations between peers offer valuable insights into students' use and understanding of chatbots in the context of feedback during a counseling course. Our approach illuminates both students’ interpersonal communication about the topic and their individual perceptions, as well as the strategies they develop to navigate perceived tensions. A potential constraint of this methodology is the possibility of self-censorship. Students may be reluctant to fully disclose their use of chatbots for fear of being judged by their peers or potential negative impacts on teacher perceptions. Additionally, the chosen research design precludes immediate follow-up questions or requests for elaboration of students’ reflections. Future studies could address this limitation by complementing reflective dialogues with post-course individual interviews. The gender imbalance among participating students could be viewed as a potential source of bias. However, the predominance of women in the research sample can be considered representative of the typical student demographic for students attending the course at the Department of Educational Science in terms of gender and age. While this study does not explore the relationship between gender and chatbot use, such a focus could prove a fruitful avenue for future research, as studies indicate that gender seems to be a factor in GAI use (Stöhr & Malmström, 2024).

Despite these limitations, we argue that the chosen methodology provides a unique window into students' thought processes and experiences with chatbots in an educational context. It allows us to capture authentic peer reflections, which are well suited to provide a nuanced understanding of how students respond to chatbot voices in formative feedback.

Analytical findings

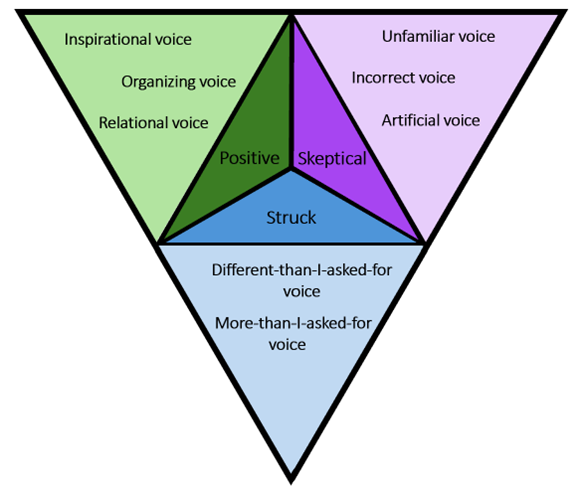

In the following analysis, we explore how students engage with and respond to chatbots in formative feedback processes. Drawing on their reflections (see table 2 for reflective questions), we identify three distinct responses (positive, skeptical, and struck) that characterize students' interactions with GAI. Within each response, we examine multiple chatbot voices and how these voices appear in the context of formative feedback. Moreover, these voices can be viewed as different ways in which chatbots contribute to students' learning processes. In our analysis, we examine how interacting with chatbots shapes students’ understanding of what it means to be critical in an academic context, and how this understanding influences which chatbot voices they accept, reject, or integrate within their learning. Finally, we analyze the pre- and post-intervention survey data to track shifting emotional responses to GAI throughout the course duration.

Chatbot voices in formative feedback

The students alternated between different responses when talking about the chatbots. Sometimes they eagerly explored the new possibilities that chatbots can offer for their studies; at other times they responded with caution and reluctance. At times they were also struck by the chatbots’ potential, which they could find somewhat scary. We have identified three overall student responses to chatbots with corresponding voices showing how students use and discuss chatbots in formative feedback processes, as illustrated in figure 2:

Figure 2. The three overall responses and the voice associated with each response.

A positive response

In a positive response, the potential of the chatbot is embraced and acknowledged, influenced by centrifugal and opening tendencies. For example, students noted that it provides a unique opportunity to practice counseling before facing real-world situations, with the chatbot described as a “coaching partner” or a “study buddy” that helps them to plan a counseling session both in theory and in practice (see table 3 for an overview of students’ descriptions of the chatbot).

Table 3. Students’ description of the chatbot.

| | |
| --- | --- |
| Automatic | Search engine |
| A bit like Wikipedia | Sparring partner |
| A computer | Tool |
| Mechanical/A machine | A reference book |
| An advisor | Not a solution generator |
| Artificial human | A proofreader |
| Extended form of Google | The cheating path |
| Study buddy | A source of inspiration |

The students expressed that chatbots can help them during the writing process in formulating and articulating their thoughts and assist them in organizing and gaining an overview of these thoughts. One student described it as follows:

“I feel that when things have become a bit chaotic for me, when I’ve had so many [different] thoughts, it has helped me to write them into the chatbot. I don’t feel like I get a finished product in return, but I feel that I get help in putting my thoughts into words and better guidelines for where I actually want to go, so I can specify it myself.”

In their interactions with the chatbot, students also experienced what it feels like to be counseled, and not only to act as a counselor. This was something the student below had clearly not considered:

“I think it’s been, well, I don’t know if... I haven’t really thought about being in the position of the one being counseled. No, and being the student when I create those prompts.”

Another student, who described herself as quite introverted, discovered that a chatbot is well-suited for “someone like her”:

“I am not comfortable asking all sorts of questions during class. Or not uncomfortable. I just don’t feel like it. I prefer to sit and think about it and work through it on my own. And then... you know, sort of sidestep many times. And then ask, ‘Is this how I’ve understood it?’ And I think the chatbot provides an opportunity for those of us who are a bit more... or for me, who is a bit more... um... introverted. And... introverted in a classroom setting. And I find that to be really nice because I don’t necessarily feel that I need to be forced to be outgoing in a classroom situation.”

Overall, in a positive response, chatbots facilitated the emergence of three new voices that enhanced students' engagement with the work on their assignment:

· An inspirational voice: offering theoretical and methodological suggestions regarding how to structure a counseling session.

· An organizing voice: helping to create coherence and structure their thoughts and text.

· A relational voice: allowing students to shift perspectives and gain insight into both counselor and client positions. Positioning the chatbot in this voice offered introverted students a new space for interaction, which they experienced as safe, potentially building their confidence to participate more actively in group settings.

A skeptical response

In a skeptical response, students exhibited resistance to working with chatbots for several reasons. Firstly, a chatbot represented new and unfamiliar voices in their learning process, with the students’ reflections in this category influenced by centripetal tendencies, shutting the dialogue down by rejecting the feedback from the chatbot. Many students were not used to working with artificial intelligence, as the exchange below illustrates:

“A: It's new for me. So, I haven’t used it because I’m very concerned about... What do you call it? The thing about copying, or what’s it called? Plagiarism. Um. I mean, using myself more as a reflective tool. It could be a word, for example. And then I might ask, what does that word mean in that context, for instance. Yeah. So that’s how I use it. But I wouldn’t think of using it for... the assignment itself. No. Um. I guess I come from the old school. Um. Where I think it’s more natural to...

B: Yeah, to think for oneself. Yes.

A: Yes. And figuring things out on your own. Yes. But maybe more as a reference tool. Yes. But it’s actually a pretty good way to think about it.”

The students seemed uncertain about how chatbots might effectively become a partner in an academic context. They feared that the chatbot could disrupt a fundamental academic premise from "the old school" that emphasizes independent thought. For instance, the student in the quote above says that she uses herself (and thereby, by implication, not the chatbot) as a “reflective tool”. The students also appeared to have a simplistic understanding of plagiarism: they knew the concept but struggled to articulate it, and they feared that if they used the chatbot in their written work, they would be accused of plagiarism. The apparent solution was to use chatbots as a sort of "reference tool," akin to a "Google search engine," and ask them to define specific words. However, this introduced a new problem: Could they trust the responses they got from the chatbot? Two students discussed this problem:

“A: But when you’ve asked it to elaborate or explain a concept, have you always felt that it was reliable? Or...?

B: Um, yes, I think I use my common sense. Yes. And I also think that, at the same time, I, for example, use articles or, you know, delve deeper. I wouldn’t just trust it 100%.

A: No.

B: And then say, 'Oh yes, that sounds like a good idea.' I’d want something to back it up. Evidence for it. Um, I don’t know if that makes sense, but if I’ve read a text or something, then I can see the connection. I wouldn’t just... Oh, I’ll just do that. No. Now you ask it, and then I think, where is it? And then I can only cross my fingers that it’s the right thing.”

This exchange illustrates a paradoxical relationship. On one hand, the students did not fully trust the chatbot or view it as a credible voice that could be granted legitimacy in an academic context. On the other hand, they used it as a form of authority check to back up what they had written. In a skeptical response, unlike in a positive response, students found it challenging to interact with a machine:

“A: It becomes very automated. Yes, exactly. I think I have trouble translating it into a chatbot because it feels so mechanical. It is so mechanical; I can’t connect it with the relational aspect.

B: No.

A: I think that's where I get stuck, so I would have difficulty responding to it. Yes. I can’t see it engaging with me relationally.

B: No. No, because it is, after all, a machine.”

A bit later, A adds:

“A: And I also think that regarding the discussions we've had about whether children and young people will use this more... I have my doubts, because it feels a bit... You get a strong sense that I’ve created something artificial.”

The chatbot’s voice is experienced as "artificial," and interacting with a machine that is not a living person — yet is expected to act like one — feels inhuman.

In a skeptical response, students were critical of the academic credibility of chatbots. They were aware of the importance of being critical in an academic context and seemed to expect the chatbot to provide this critical perspective in formative feedback. They described the chatbot as being overly positive, without reflecting on the implication of their own critical stance or ways of interacting with the chatbot:

“But this thing about professional counseling competence – I care a lot about that. Being critical. And I don’t really think it has... um... been able to help me all that much with that. So it's been good again for all the positive things. Like saying... That you're actively listening. And that you're supportive in a counseling situation. But... What I really need more is to know what I’m not doing... um...”

When students dismissed answers from the chatbot, they described the rejected response as "a bowl of cherries" and “all cake and no substance". In other words, they experienced the chatbot merely "telling them what they want to hear" and thought that it did not provide the critical feedback they needed:

“It's difficult to get it to provide a critical perspective on my work, which is probably what I need. I find it to be very polite and... yes, very positive. Naturally, because it knows a lot about the subject area”

This created a paradoxical situation where students expected to receive a critical perspective from the chatbot without actively contributing to such a perspective, even though they were aware that they could have "put more effort into prompting it to be a bit more critical", as one student put it. Some students assumed that the chatbot remembers everything that users enter into the large language model and that it functions like Wikipedia. The following exchange is an example of how three students responded to and negotiated the potential uses of the feedback they received:

“A: What have you found unusual or perhaps strange in the feedback that chatbots have given you, and what have you chosen not to use? [reads a reflective question, see table 2]

B: Well, it really depends on what you're using it for. If it’s very theoretical or factual, then I just go ahead and use it.

C: But should we imagine that if you upload your entire assignment, it stays in its memory forever?

B: Yeah.

A: So I could say, 'Once, another student wrote this for someone else'.

B: Yeah.

C: Okay.

B: So, it's kind of like...

B: I also often see it as... And that's why you have to be critical of what it writes because it's kind of like Wikipedia.

C: I mean, anyone can go in and tell it that something isn’t right.”

Likewise, when the chatbot provided different answers to the same question, this clearly confused students and created a tension as to whether or not they could trust the chatbot voice. They were therefore skeptical and critical of whether the answer they received was "correct." Their understanding of "being critical" evidently implied not believing everything the chatbot returns. However, because of their limited knowledge of GAI, the students made erroneous assumptions about how it functions, comparing it, for example, to Wikipedia or search engines.

Overall, three new voices become apparent in the skeptical response to the chatbot's relevance in an academic context:

· An unfamiliar voice: maintaining a distance from this new voice due to its novelty and uncertainty about its legitimacy.

· An incorrect voice: uncertainty leading to dismissal of the chatbot voice as not being academic or credible.

· An artificial voice: finding it challenging to interact with a machine, experiencing the new voice as artificial and out of place in a counseling context that focuses on human interaction.

A struck response

The final position is characterized by students’ different degrees of struckness in their response to what the chatbot delivers during formative feedback. Cunliffe describes struckness as embodied and potentially associated with an “unsettling” experience (Cunliffe, 2002, p. 36). It is a condition where one suddenly becomes aware of something that challenges existing understandings or perspectives, resulting in sensations of discomfort or feeling overwhelmed. This response to chatbot voices is marked by competing opening and closing movements or tendencies: a centripetal tendency towards unity and an expanding, centrifugal tendency towards dialogue and diversity. For instance, a centrifugal tendency was evident when one student described how the chatbot gave her a more detailed answer than she had asked for. Her question concerned a systemic approach to a specific counseling session that she intended to use in her assignment:

“A: So I asked it to categorize it into these four circular and linear divisions in Tomm’s model for systemic questions. And what it did, which I hadn’t asked for, was that it also described what linear thinking is and what circular thinking is. It included a little sentence about what each of them is.

B: Yes.

A: And I hadn’t asked for that.

B: No.

A: I had just asked it to categorize the questions. But I think I was actually inspired by that.”

The student here describes how the chatbot not only answered the specific question she had asked about placing questions in certain categories but also elaborated on the thinking behind the different categories. This seemed to surprise her. The adverb "actually" indicates that it was not something she had expected.

“A: I mean, I think I’m quite new to this whole chatbot thing. I actually find it quite interesting. The longer I get to work with it, the more interesting it becomes. And I also think it offers some good suggestions and points out things I might not have considered myself […] And it's not as straightforward as I had thought. I think that's great.

B: Yes.

C: And it’s exactly that — it takes you further out than you would go on your own. It helps you think outside the box a bit.

A: It lifts you out of your own box a little.”

As such, the chatbot can prompt a process in which "one starts to think a bit further." However, as another student pointed out, you can also be "a bit seduced by the comments it makes." Some students also found it somewhat uncomfortable when the chatbot, of its own accord, continued to add details to the cases they were working on in role-playing exercises. For example, one student described how the chatbot continued to elaborate on her case and the client:

“A: ‘Judy had two children and worked,’ and it went into details about all sorts of things.

B: Oh.

A: Well, I hadn’t mentioned that in the case.

B: No, okay.

C: Wow, that’s crazy

A: I think, in a way, it starts to... It’s a bit uncomfortable.”

Some students found that the chatbot was not only a constructive resource in their writing process but also a bit "scary":

“It has been fun and helpful. It's amazing that you can have a longer conversation with the chatbot about all sorts of things, but it's also a bit scary, so I feel that you have to be extremely critical of what it writes.”

Some students demonstrated an awareness of the reflexive connection between questions and answers when pointing out that the academic input they received from the chatbot depended on their prompts. As one student put it:

“So, there’s a lot it doesn’t know. And there’s also a lot of responsibility in giving it the correct prompts. It doesn’t reduce the effort required. And I actually like that about it. It still requires me to do my groundwork.”

In addition, some students discovered a connection between how they interacted with a chatbot and how they interact with clients as counselors. In the interaction sequence below, two students display an awareness of how questions shape the direction and possibilities of any counseling session:

“A: You can't just get one answer and then move on. It’s also important to figure out that you shouldn't give up if you don’t get the answer you want. You should rephrase it or ask, ‘Can you be more specific?’ or ‘Can you elaborate in a different way?’

B: But I also realize that I need to learn to prompt more clearly.

A: Yes.

B: And that's actually quite good because it also helps me learn to ask clearer questions as a counselor.

A: So the challenge is that if you haven’t written a specific and well-defined question in relation to what’s relevant for your assignment, it can give you a lot of general knowledge, concepts, and theories, and you’ll have to navigate through it yourself.

B: Yes.

A: So it’s very much about being as precise as possible.

B: Exactly.”

In summary, interacting with a chatbot during the counseling course sparked reflections on the dynamic relationship between questions and answers, a relationship that is key to counseling education.

As shown above, in the struck response, two voices emerge in students’ responses to their interactions with chatbots while working on their assignments:

· A more-than-I-asked-for voice: where students experience receiving more than requested. This can be both useful and frightening.

· A different-than-I-asked-for voice: where students receive something other than they had expected, which can "lift you out of your box."

To summarize, in the analysis, we have identified three types of student responses to chatbots during formative feedback processes: positive, skeptical, and struck responses. Moreover, the findings highlight tensions between the ways in which students perceive chatbot voices as both rewarding and problematic in an academic context. There appears to be a competing alternation between centrifugal and centripetal tendencies, i.e. between opening up to new voices and shutting down dialogue by dismissing such voices. When struckness occurs, skepticism potentially transforms into openness and playfulness during interactions with the chatbot. These varying perspectives shift between viewing the chatbot as personal and inspirational, and perceiving it as unfamiliar, potentially incorrect, and artificial. The element of being struck tends to highlight students' initial skepticism, especially when the chatbot offers more than they expected or provides something unexpected, for example when a machine behaves in an almost human manner by delivering detailed insights into the specific cases they are working on. While this can facilitate dialogue, create centrifugal tendencies, and be rewarding, it can also feel somewhat "scary" and shut the dialogue down, i.e. initiate centripetal tendencies.

In accordance with previous studies conducted within the framework of the counseling course, the process of giving and receiving feedback seems to be emotionally charged and relationally complex (Nordentoft & Møller, 2020, 2022). The introduction of chatbots does not reduce this complexity. The analysis of the students’ reflections clearly shows that emotions play an important role in shaping their interactions with the chatbot in the context of formative feedback. Drawing on previous research, and in order to trace the potential link between feedback and emotion in the context of GAI, we therefore included a question specifically on students’ emotional response to chatbots in an online pre- and post-intervention survey. In the following, we present the results of these surveys.

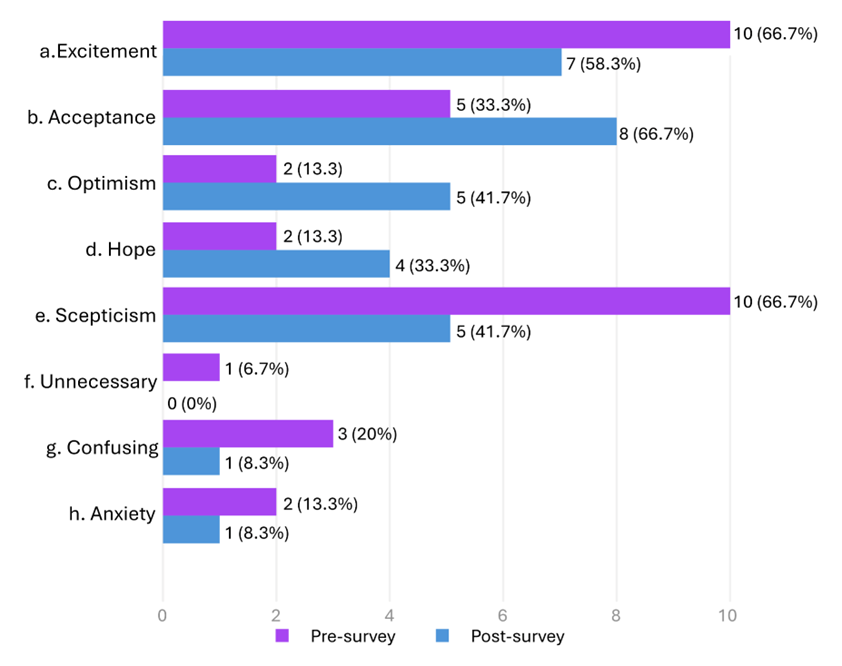

Emotional responses prior to and after the introduction of GAI

In the pre- and post-intervention surveys, we asked students to respond to the question “Which of the following describes your feelings and attitudes toward artificial intelligence like ChatGPT or other chatbots? It is possible to select multiple answers.” Figure 3 shows their answers and how the students' emotional responses to GAI changed throughout the course. Among the 16 students who completed the pre-survey and the 12 who completed the post-survey, we observed several shifts in attitudes. More students expressed optimism concerning the use of chatbots after the course (5 students) compared to before (2 students). Interestingly, while skepticism and excitement were initially the most common responses (10 students each), fewer students reported skepticism after the course (5 students). Given that participants could select multiple emotions in both surveys, these changes likely reflect evolving attitudes as students gained experience with GAI tools, rather than simply exchanging one emotion for another. Overall, there was an apparent decrease in negative emotional responses to GAI, with fewer students giving responses such as confusion, anxiety, and a belief that AI was “unnecessary”. This decrease in negative responses, alongside increases in optimism and acceptance, suggests that as students gained practical experience using GAI tools during the course, their perspectives likely became more nuanced. While these findings provide valuable insights into how emotions toward GAI may evolve through structured educational experiences, we present them as exploratory observations from a small sample rather than statistically significant results.

Figure 3. Pre- and post-survey: Question on students’ emotional response to chatbots.
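Because the two surveys had different numbers of respondents (16 and 12), the raw counts reported above are not directly comparable. The following minimal Python sketch converts the four counts stated in the text into shares of respondents. It is an illustration added for the reader, not part of the original analysis; the helper name `share` is hypothetical, and the calculation assumes that each respondent could select a given option at most once.

```python
# Respondent totals and counts as reported in the text (pre-survey, post-survey).
PRE_N, POST_N = 16, 12

counts = {
    "optimism": (2, 5),
    "skepticism": (10, 5),
}


def share(count, n):
    """Return `count` as a percentage of `n` respondents, rounded to one decimal.

    Assumption: each respondent selected a given option at most once.
    """
    return round(100 * count / n, 1)


# Percentage of respondents selecting each option before and after the course.
shares = {
    emotion: (share(pre, PRE_N), share(post, POST_N))
    for emotion, (pre, post) in counts.items()
}

for emotion, (pre_pct, post_pct) in shares.items():
    print(f"{emotion}: {pre_pct}% before, {post_pct}% after")
```

Expressed this way, optimism and skepticism were each selected by the same share of post-survey respondents. With samples this small, the shares remain exploratory observations, just like the counts.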

The emotional shift between students’ responses in the pre-survey and post-survey data is also evident in the qualitative data. However, as described above, the qualitative data also reveal how students’ emotional journey seemed to be marked by ambivalence and shifting moods in the formative feedback process when navigating the uncertain nature of GAI. This uncertainty can be said to mirror counseling practices, where counselors must deal with the various situated and complex needs of clients, develop reflective practices, and be able to engage with multiple perspectives (Maurya, 2023).

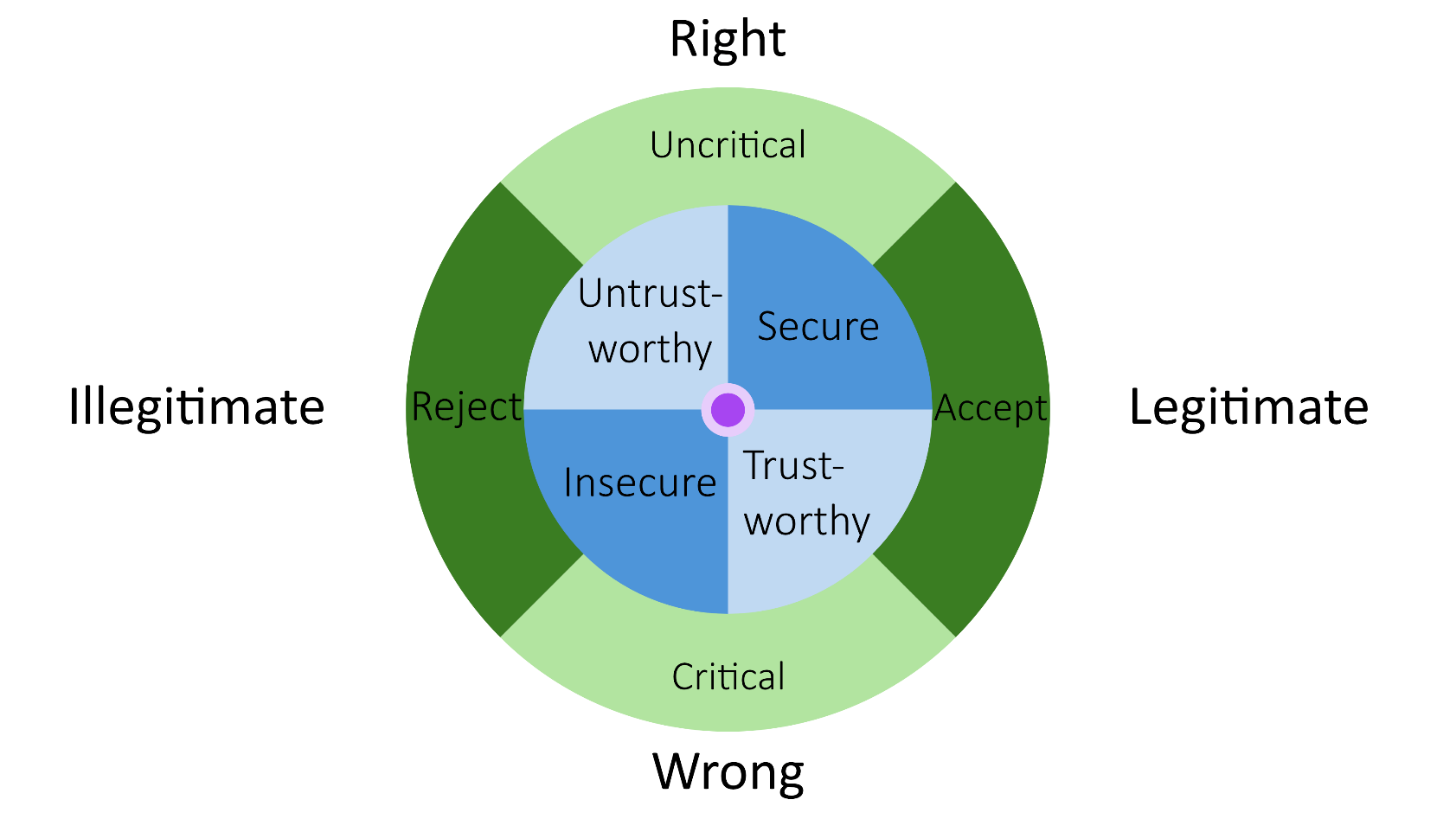

To systematize our analytical insights and better understand the emotional dynamics at play, we have developed “the emotional ambivalence compass”, a model that captures the field of tension between the different voices emerging in students’ responses to the significance of GAI for both their academic work and future counseling practice (Figure 4).

Capturing analytical insights: the emotional ambivalence model

By using the metaphor of a compass, “the emotional ambivalence compass” evokes an image of students’ continuous and dynamic navigation through complex, often uncharted, and uncertain territory. The compass consists of three rings that are reflexively interconnected, existing in synergy and tension with one another. The outer ring contains four poles arranged on two axes. On one axis, the chatbot's voice is seen as either right or wrong. Negotiating these poles is closely connected to the other axis, which concerns whether integrating GAI into academic work is perceived as legitimate or illegitimate. For instance, one student mentioned feeling somewhat "old-fashioned" and thus reluctant to use GAI in her assignment, fearing potential accusations of plagiarism (Jensen, 2024). This introduces considerations of research ethics regarding good academic practice and valid knowledge in an academic context (Jensen et al., 2024; O’Donovan, 2017). Conversely, other students did not share these reservations and were surprised by how constructive and inspiring the chatbot's feedback could be. One student, for instance, remarked that it "makes her think more deeply." The tension between the poles in the outer ring influenced how students approached and negotiated the chatbot's feedback. This is illustrated by the middle ring, which depicts whether they responded critically or uncritically and whether they accepted or rejected its voice. Some students accepted it only under certain conditions, viewing it as a reference tool akin to a search engine.

The poles in the two outer rings give rise to, or stem from, the conflicting emotions highlighted in the inner ring: feeling secure or insecure in interactions with the chatbot, and/or feeling that chatbot voices are trustworthy or untrustworthy. These emotions indicate whether students felt close to and understood by the "machine voice" that the chatbot represents, or alienated by it. For example, we observed how an introverted student experienced a sense of intimacy and understanding in her dialogue with the chatbot, making her feel more secure and less hesitant in asking questions. Other students also experienced meaningful interactions, not only when being counseled by the chatbot but also when acting as a counselor with the chatbot playing the client role, which gave them new insights. Conversely, some students felt alienated and uncertain due to the chatbot's responses, which often included unsolicited and overly detailed elaborations on specific scenarios.

The compass illustrates not only the emotional fields of tension that students navigate but also the ethical tensions between striving to do good, to do what is right in an academic context, and to feel understood (and their opposites), encompassing both research ethics and practical counseling discourses. According to Cunliffe (2004), students' emotional responses can act as a catalyst for deeper critical reflexivity and learning, whether they feel struck or surprised or experience sudden, strong resentment. This learning process involves a more thorough reflexive exploration of connections and possible tensions between questions, prompts, and the answers students are given by the chatbot. Investigating emotional responses in this process can highlight one's assumptions, values, and actions.

Figure 4. The emotional ambivalence compass.

Applying this line of thinking to students’ responses to the chatbot’s voice during formative feedback processes raises significant questions concerning professional identity and self-understanding. These negotiations of the significance of chatbot voices therefore have the potential to foster active inquiry and reflection, rather than merely reproducing the fixed norms and categories of counseling as a professional field. At the same time, these negotiations provide an arena for cultivating the ability to handle complexity, emphasize the importance of context, and navigate multiple voices — all of which are essential counseling and academic competencies that shape students into critical thinkers rather than merely moral professionals (Cunliffe, 2004).

Conclusion

In the fall of 2023, we introduced generative artificial intelligence (GAI) in formative feedback processes as part of the master's degree course "Counseling as Pedagogical and Institutional Practice" at Aarhus University. Throughout the course, students were presented with opportunities to interact with chatbots in different roles and were invited to reflect on their experiences through dialogue with peers. In this longitudinal mixed-methods study, we investigated how students responded to the introduction of chatbots during formative feedback processes.

Following Bakhtin, we understand learning as emerging from the tension between different voices in a dialogue, where "voice" represents an expression of a certain (value) position—whether theoretical, methodological, or experiential. By introducing chatbots as potential "relevant others" as part of the formative feedback process (Carless & Young, 2024), we analyzed how students respond to chatbot voices during this process. Our analytical findings revealed that their response is complex, dynamic, and emotionally charged. We identified three responses that students oscillate between: a positive response characterized by openness and centrifugal tendencies; a skeptical response marked by resistance and centripetal tendencies; and a struck response where students experience both opening and closing movements simultaneously. The pre- and post-survey data indicate that the course led to an overall decrease in negative emotional responses to GAI and an increase in positive emotions. This might suggest that the students discovered an unanticipated educational potential in chatbot interactions.

A significant finding was the emotional ambivalence in students' responses. They expressed enthusiasm and excitement about the possibilities of chatbots one moment; the next moment, they questioned the legitimacy of chatbot voices in an academic context. By introducing "the emotional ambivalence compass," we have conceptualized this field of tension as potentially productive in terms of students' formative feedback and learning processes. The compass illustrates how students navigate between seeing chatbot responses as right or wrong, and as legitimate or illegitimate in academic work, while simultaneously negotiating whether to accept or reject these voices based on their understanding of what it means to be critical. The tensions, therefore, surface between seeing chatbot voices as inspirational, organizing and relational (in positive responses); as unfamiliar, incorrect, and artificial (in skeptical responses); or as providing something "more than asked for" or "different than expected" (in struck responses). Significantly, when struckness occurs, skepticism can potentially transform into openness and playfulness, creating opportunities for deeper engagement with chatbot feedback.

In conclusion, we have argued that GAI's learning potential can only be realized when these emotional fields of tension are acknowledged and articulated in academic work and feedback processes. Therefore, we invite teachers and supervisors to use the emotional ambivalence compass in collaborative and critical reflexive dialogues with students about their experiences with GAI. Providing dedicated space within courses to collectively negotiate the relevance of chatbot interactions within a subject-didactic framework can support the development of students' critical reflexivity and, consequently, enhance their professional and academic competencies in higher education. By embracing rather than avoiding the emotional ambivalence that characterizes students’ encounters with chatbots, educators can leverage these tensions as new, productive spaces for meaning-making and learning.

References

Braun, V., & Clarke, V. (2019). Reflecting on reflexive thematic analysis. Qualitative Research in Sport, Exercise and Health, 11(4), 589-597. https://doi.org/10.1080/2159676X.2019.1628806

Braun, V., & Clarke, V. (2021). One size fits all? What counts as quality practice in (reflexive) thematic analysis? Qualitative Research in Psychology, 18(3), 328-352. https://doi.org/10.1080/14780887.2020.1769238

Bruun, M. H., Krause-Jensen, J., & Hasse, C. (2024). Store sprogmodeller og AI-chatbots på videregående uddannelser. Pædagogisk Indblik, 26. https://unipress.dk/media/21032/26_store_sprogmodeller_og_ai-chatbots_paa_videregaaende_uddannelser_-11-12-2024.pdf

Carless, D., & Young, S. (2024). Feedback seeking and student reflective feedback literacy: a sociocultural discourse analysis. Higher Education, 88, 857–873. https://doi.org/10.1007/s10734-023-01146-1

Charmaz, K. (2014). Constructing grounded theory. Sage.

Cunliffe, A. L. (2004). On becoming a critically reflexive practitioner. Journal of Management Education, 28(4), 407-426. https://doi.org/10.1177/1052562904264

de Kleijn, R. A. M. (2023). Supporting student and teacher feedback literacy: an instructional model for student feedback processes. Assessment & Evaluation in Higher Education, 48(2), 186-200.

Escalante, J., Pack, A., & Barrett, A. (2023). AI-generated feedback on writing: insights into efficacy and ENL student preference. International Journal of Educational Technology in Higher Education, 20(1), 57. https://doi.org/10.1186/s41239-023-00425-2

Frank, A. (2005). What is dialogical research and why should we do it? Qualitative Health Research, 15(7), 964–974. https://doi.org/10.1177/1049732305279078

Jensen, A. S., Bach, K. M., Nordentoft, H. M., Møller, K. L., & Mariager-Anderson, K. (2024). Hvorfor deltager de ikke? Diskursive positioner i førsteårsstuderendes møde med peerfeedback (Why don't they participate? Discursive Positions in first-year Students' encounter with Peer Feedback). Dansk Universitetspædagogisk Tidsskrift, 19(36). https://doi.org/10.7146/dut.v19i36.140456

Jensen, T. W. (2024). Når kunstig intelligens bliver en del af vejledningsrummet (When Artificial Intelligence becomes part of the Supervision Context). Dansk Universitetspædagogisk Tidsskrift, 19(36). https://doi.org/10.7146/dut.v19i36.140339

Jensen, T. W., & Nordentoft, H. M. (2022). Academic Writing Development of Master’s Thesis Pair Writers: Negotiating Writing Identities and Strategies. Journal of Academic Writing, 12(1), 50-67. https://doi.org/10.18552/joaw.v12i1.840

Lai, C. Y., Cheung, K. Y., & Chee Seng, C. (2023). Exploring the role of intrinsic motivation in ChatGPT adoption to support active learning: An extension of the technology acceptance model. Computers and Education: Artificial Intelligence, 5, 100178. https://doi.org/10.1016/j.caeai.2023.100178

Macbeth, D. (2001). On “reflexivity” in qualitative research: Two readings, and a third. Qualitative Inquiry, 7(1), 35-68.

Maurya, R. K. (2023). Using AI Based Chatbot ChatGPT for Practicing Counseling Skills Through Role-Play. Journal of Creativity in Mental Health, 1-16. https://doi.org/10.1080/15401383.2023.2297857

Maurya, R. K. (2024). A qualitative content analysis of ChatGPT's client simulation role‐play for practising counselling skills. Counselling and Psychotherapy Research, 24(2), 614-630. https://doi.org/10.1002/capr.12699

Maurya, R. K., & DeDiego, A. C. (2023). Artificial intelligence integration in counsellor education and supervision: A roadmap for future directions and research inquiries. Counselling and Psychotherapy Research. https://doi.org/10.1002/capr.12727

McGrath, C., Farazouli, A., & Cerratto-Pargman, T. (2024). Generative AI chatbots in higher education: a review of an emerging research area. Higher Education. https://doi.org/10.1007/s10734-024-01288-w

McLeod, J. (2013). Theory in counselling: using conceptual tools to facilitate understanding and guide action. In J. McLeod (Ed.), An Introduction to Counselling (pp. 57-80). Open University Press.

Molloy, E., Boud, D., & Henderson, M. (2020). Developing a learning-centred framework for feedback literacy. Assessment & Evaluation in Higher Education, 45(4), 527-540. https://doi.org/10.1080/02602938.2019.1667955

Morgan, D. L., & Nica, A. (2020). Iterative Thematic Inquiry: A New Method for Analyzing Qualitative Data. International Journal of Qualitative Methods, 19, 160940692095511. https://doi.org/10.1177/1609406920955118

Nordentoft, H. M., Hvass, H., Mariager-Anderson, K., Bengtsen, S. S., Smedegaard, A., & Warrer, S. D. (2019). Kollektiv Akademisk Vejledning. Fra forskning til praksis (Collective Academic Supervision - From Research to Practice). Aarhus Universitetsforlag.

Nordentoft, H. M., Jensen, T. W., & Bengtsen, S. S. (2020). ”Vi er rigtig meget ens”: Peer-dynamik i samarbejdet mellem specialeskrivende par (”We Are Very Much Alike”: Peer Dynamics in the Collaboration Between Thesis-Writing Pairs). Dansk Universitetspædagogisk Tidsskrift, 16(28).

Nordentoft, H. M., & Møller, K. L. (2020). ”Vi ved godt, at det bare er på ’note-plan’” - Studerendes digitale læringsstrategier i peer feedback via Screencast (“We Know It’s Just at the ‘Note Stage’”: Students’ Digital Learning Strategies in Peer Feedback via Screencast). Tidsskriftet Læring og Medier (LOM), 13(23). https://doi.org/10.7146/lom.v13i23.122012

Nordentoft, H. M., & Møller, K. L. (2022). Emotionelt arbejde og læring i asynkron peer-feedback (Emotion Work and Learning in Asynchronous Peer Feedback). Dansk Universitetspædagogisk Tidsskrift, 17(33), 97-115. https://doi.org/10.7146/dut.v17i33.129425

Nordentoft, H. M., Thomsen, R., & Wichmann-Hansen, G. (2013). Collective academic supervision: a model for participation and learning in higher education. Higher Education, 65(5), 581-593.

O’Donovan, B. (2017). How student beliefs about knowledge and knowing influence their satisfaction with assessment and feedback. Higher Education, 74(4), 617-633. https://doi.org/10.1007/s10734-016-0068-y

Phillips, L. J. (2011). The Promise of Dialogue. The dialogic turn in the production and communication of knowledge. John Benjamins Publishing Company.

Stöhr, C., Ou, A. W., & Malmström, H. (2024). Perceptions and usage of AI chatbots among students in higher education across genders, academic levels and fields of study. Computers and Education: Artificial Intelligence, 7, 100259.

White, J., Fu, Q., Hays, S., Sandborn, M., Olea, C., Gilbert, H., Elnashar, A., Spencer-Smith, J., & Schmidt, D. C. (2023). A Prompt Pattern Catalog to Enhance Prompt Engineering with ChatGPT. arXiv.org. https://doi.org/10.48550/arxiv.2302.11382